WEEK 05-2026 AI 3X3 BRIEF

TL;DR: Sysdig caught an attacker using AI to go from stolen credentials to full admin control of an AWS environment in eight minutes flat. Zscaler's latest data shows enterprises pumped 18,033 terabytes into AI applications last year—a 93% jump—while ChatGPT alone racked up 410 million data loss prevention violations. And the White House just ripped up the Biden-era software security requirements while states keep piling on their own AI laws, leaving every business stuck in regulatory limbo.

🚨 DEVELOPMENT 1

8-Minute AWS Takeover: AI-Assisted Attacks Are Here

What Happened

Sysdig's Threat Research Team published details of an attack observed on November 28, 2025. Starting from stolen credentials, an attacker reached full admin access in an AWS environment in eight minutes.

The entry point: valid credentials sitting in public S3 buckets named using common AI tool conventions. The attacker used LLMs to automate reconnaissance, generate malicious Python code (with comments in Serbian, suggesting the operator's origin), and inject it into an existing Lambda function. Three iterations later, they had admin keys.

From there, lateral movement across 19 AWS principals, data exfiltration from Secrets Manager and CloudWatch, then a pivot to LLMjacking—running up compute costs on Amazon Bedrock models including Claude, DeepSeek R1, and Amazon Titan.

Why It Matters

→ AI didn't create the vulnerability. It compressed the timeline. As Keeper Security's CISO Shane Barney put it: "AI doesn't invent new attack vectors here. It removes hesitation." The credentials were the real problem. AI just made sure the attacker didn't waste a second once they had them.

→ The AI left fingerprints—for now. Sysdig flagged "hallucinated" AWS account IDs and references to GitHub repos that don't exist. LLMs generating attack code sometimes make things up. But those sloppy artifacts will get cleaned up as offensive tools improve.

→ LLMjacking is becoming standard practice. Once inside, the attacker immediately checked whether Bedrock's model invocation logging was disabled—then started running up charges on AI models. Stolen cloud accounts aren't just about data anymore. They're about compute.

Enterprise: This is a credential management story before it's an AI story. Audit your S3 buckets. Enforce least privilege on Lambda execution roles. Enable Bedrock model invocation logging.

SMB: If you're running workloads in AWS or any cloud, ask your team: "Do we have any credentials in storage buckets?" If nobody can answer immediately, you have a problem.
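One way to start answering that question is a pattern scan over the contents of your storage objects. The sketch below is a minimal illustration, not a full secret scanner: it assumes you have already pulled object contents into a plain `{name: contents}` mapping (the `sample` data and both function names are hypothetical), and it only looks for strings shaped like AWS access key IDs.

```python
import re

# AWS access key IDs are 20 characters: a 4-letter prefix such as AKIA
# (long-term) or ASIA (temporary), followed by 16 uppercase letters or digits.
ACCESS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[A-Z0-9]{16}\b")


def find_access_key_ids(text: str) -> list[str]:
    """Return every string in `text` that looks like an AWS access key ID."""
    return ACCESS_KEY_ID.findall(text)


def scan_objects(objects: dict[str, str]) -> dict[str, list[str]]:
    """Map each object name to the key-like strings found in its contents.

    `objects` is a hypothetical {name: contents} mapping standing in for
    whatever your bucket listing and download step produces.
    """
    hits = {}
    for name, body in objects.items():
        found = find_access_key_ids(body)
        if found:
            hits[name] = found
    return hits


# Illustrative data only. The key below is the placeholder from AWS docs.
sample = {
    "notebooks/train.env": "AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE\n",
    "notebooks/readme.txt": "No secrets in here.\n",
}
print(scan_objects(sample))
```

A match is a lead, not proof: a key-shaped string still needs to be checked against your IAM users and rotated if it is live. Dedicated secret scanners cover far more credential types than this one pattern.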

Action Items:

Scan for exposed credentials in all cloud storage—especially buckets tied to AI workloads

Restrict Lambda permissions (UpdateFunctionCode, PassRole) to only the identities that genuinely need them

Enable model invocation logging on Amazon Bedrock and set up alerts for unauthorized model usage
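For the Lambda permissions item, a rough first pass is to look for IAM policy statements that allow `lambda:UpdateFunctionCode` or `iam:PassRole` on every resource. The sketch below works on a policy document already loaded as a Python dict. The function names, the sample policy, and the role ARN are illustrative assumptions, and the check deliberately ignores `NotAction`, `Condition` blocks, and partial resource wildcards, so treat it as a starting filter rather than a complete audit.

```python
from fnmatch import fnmatchcase

# The two permissions named in the action item above.
RISKY_ACTIONS = ("lambda:UpdateFunctionCode", "iam:PassRole")


def _as_list(value):
    """IAM allows a single string or a list for Action and Resource."""
    return value if isinstance(value, list) else [value]


def _grants(pattern: str, action: str) -> bool:
    """True if an IAM action pattern such as 'lambda:*' covers `action`."""
    return fnmatchcase(action.lower(), pattern.lower())


def risky_statements(policy: dict) -> list[dict]:
    """Return Allow statements that grant a risky action on every resource."""
    flagged = []
    for stmt in _as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue
        patterns = _as_list(stmt.get("Action", []))
        resources = _as_list(stmt.get("Resource", []))
        grants_risky = any(_grants(p, a) for p in patterns for a in RISKY_ACTIONS)
        if grants_risky and "*" in resources:
            flagged.append(stmt)
    return flagged


# Hypothetical policy; 123456789012 is a placeholder account ID.
policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "lambda:*", "Resource": "*"},
        {
            "Effect": "Allow",
            "Action": ["iam:PassRole"],
            "Resource": "arn:aws:iam::123456789012:role/app-role",
        },
        {"Effect": "Deny", "Action": "iam:PassRole", "Resource": "*"},
    ],
}
for stmt in risky_statements(policy):
    print("Review:", stmt)
```

Only the first statement is flagged here: the second is scoped to one role, and the third is a Deny.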

🔴 DEVELOPMENT 2

Zscaler: Your AI Tools Are Hemorrhaging Corporate Data

What Happened

Zscaler's ThreatLabz team released the 2026 AI Security Report on January 27, analyzing nearly one trillion AI and ML transactions from about 9,000 organizations throughout 2025.

Enterprises sent 18,033 terabytes of data to AI applications last year, a 93% increase. ChatGPT alone received 2,021 terabytes across 115 billion transactions. Codeium logged another 42 billion transactions. Enterprise AI activity grew 91% year over year across more than 3,400 applications, four times more than the year before.

A lot of that data shouldn't have been there. ChatGPT triggered 410 million data loss prevention violations, including attempts to share Social Security numbers, source code, and medical records. ThreatLabz warns that these platforms have quietly become "the world's most concentrated repositories of corporate intelligence"—and prime targets for espionage.

The bigger issue isn't ChatGPT, though. It's embedded AI—features baked into SaaS platforms like Atlassian and Salesforce that are often enabled by default and invisible to legacy security filters. As Riaan Gouws, CTO of Forward Edge-AI, told TechNewsWorld: "Your employees aren't choosing to use AI. AI is just happening in the background of tools they already use. You can't solve it by blocking ChatGPT at the firewall. The AI is already inside the applications you sanctioned."

Why It Matters

→ Blocking isn't working. Enterprises blocked 39% of all AI access attempts in 2025. But Zscaler found that when organizations block AI tools, users shift to unsanctioned alternatives, personal accounts, or embedded features inside approved platforms—often with less visibility and fewer controls. The problem moves sideways.

→ Embedded AI is different from shadow AI. Shadow AI is an employee installing ChatGPT on their own. Embedded AI is Salesforce or Atlassian rolling out AI features that activate by default inside tools IT already approved. Your security filters don't flag them because they look like normal SaaS traffic.

→ The data is concentrated. 18,033 terabytes—roughly 3.6 billion digital photos worth—funneled into a handful of platforms: ChatGPT, Grammarly, Microsoft Copilot, Codeium. If an attacker compromises one of those platforms, they don't need to breach your network. They already have your data.
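To sanity-check those volume figures, here is a quick back-of-the-envelope calculation. It uses only the numbers quoted above and assumes decimal terabytes (10^12 bytes). The implied prior-year volume is our own derivation from the 93% increase, not a figure from the report.

```python
TB = 10**12  # decimal terabyte in bytes (an assumption about the report's units)

total_tb = 18_033    # data sent to AI applications in 2025
chatgpt_tb = 2_021   # portion sent to ChatGPT
growth = 0.93        # reported increase over the prior year
photos = 3.6e9       # the "digital photos" comparison

bytes_per_photo = total_tb * TB / photos
prior_year_tb = total_tb / (1 + growth)
chatgpt_share = chatgpt_tb / total_tb

print(f"{bytes_per_photo / 1e6:.1f} MB per photo")
print(f"{prior_year_tb:,.0f} TB implied for the prior year")
print(f"{chatgpt_share:.1%} of the total went to ChatGPT")
```

The photo comparison works out to roughly 5 MB per image, and ChatGPT accounts for a bit over a tenth of the total volume.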

Enterprise: Inventory time. Not just standalone AI tools—which SaaS platforms in your stack have embedded AI features running right now? Which ones activated by default without anyone reviewing data handling?

SMB: You can't block your way out of this. Your team is using Grammarly, ChatGPT, and a dozen AI features inside tools you already pay for. Find out what's running, set clear guidelines on what data goes where, and enforce them.

Action Items:

Audit embedded AI features in your existing SaaS stack—many activate by default and process data without IT's knowledge

Update DLP policies specifically for AI application traffic—most existing rules weren't built for this

Shift from "block everything" to governed enablement—blocking pushes users toward less visible alternatives
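As a small illustration of what an AI-aware DLP rule can look like, the sketch below redacts strings shaped like U.S. Social Security numbers from a prompt before it leaves your environment. The function name, the replacement token, and the idea of running this in a gateway in front of the AI tool are all assumptions for the example. Real DLP products match many more data types and validate candidates more carefully.

```python
import re

# Matches the common dashed SSN layout, e.g. 123-45-6789.
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def check_prompt(prompt: str) -> tuple[str, int]:
    """Redact SSN-shaped strings; return (clean_prompt, number_of_hits)."""
    return SSN.subn("[REDACTED-SSN]", prompt)


clean, hits = check_prompt("Customer SSN is 123-45-6789, please summarize.")
print(hits, clean)
```

The hit count is the useful part for governed enablement: it lets you log and alert on risky prompts while still letting the sanitized request through.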

FROM OUR PARTNERS

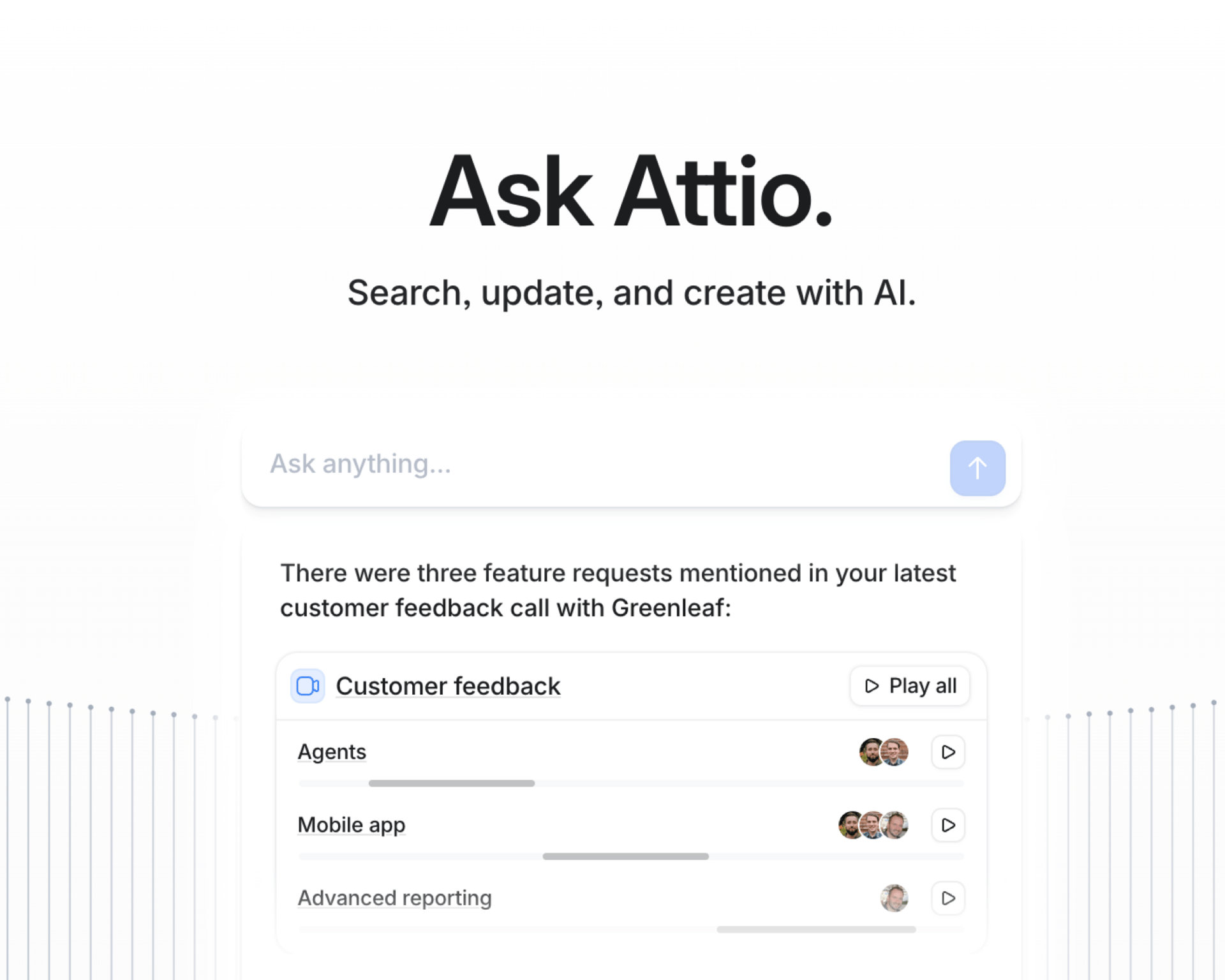

Attio is the AI CRM for modern teams.

Connect your email and calendar, and Attio instantly builds your CRM. Every contact, every company, every conversation, all organized in one place.

Then Ask Attio anything:

Prep for meetings in seconds with full context from across your business

Know what’s happening across your entire pipeline instantly

Spot deals going sideways before they do

No more digging and no more data entry. Just answers.

📊 DEVELOPMENT 3

The AI Regulation Mess: Federal Rollback Meets State Pile-On

What Happened

On January 23, the White House's Office of Management and Budget issued Memorandum M-26-05, rescinding two Biden-era directives (M-22-18 and M-23-16) that required federal agencies to get software security attestations from vendors. OMB Director Russell Vought called them "unproven and burdensome software accounting processes that prioritized compliance over genuine security investments."

Agencies can still use attestation forms and SBOMs voluntarily. They're just not required to. Each agency head now sets their own assurance policies.

Meanwhile, the states keep adding requirements. California, Texas, and Illinois all have AI laws that took effect January 1. Colorado's AI Act kicks in June 30. And a December 2025 executive order established a DOJ AI Litigation Task Force to challenge state laws the administration considers "onerous"—while conditioning federal broadband funding on states avoiding aggressive AI regulation.

The net result, as InformationWeek reported: businesses are stuck between state requirements that keep expanding and federal requirements that keep disappearing.

Why It Matters

→ The federal floor just got pulled out. Whether you thought the attestation requirements were useful or theater, they were a baseline. Tim Mackey of Black Duck noted that this strips out key software assurance elements, leaving zero trust and SBOMs as the only remaining pillars—and SBOMs are now optional.

→ States are filling the vacuum inconsistently. At least 18 state privacy laws are now in effect, including those that went live January 1, 2026. Some require bias audits. Others mandate opt-outs from automated decisions. Colorado targets "algorithmic discrimination." If you operate in multiple states, you're juggling conflicting requirements with no federal standard to anchor against.

→ Regulatory uncertainty has a real price tag. As one CISO told InformationWeek, the cost shows up in "legal fees, forced model/feature rollback, and class-action style discovery that becomes a forensic audit of your data and decisioning."

Enterprise: Build to the strictest standard across your operating jurisdictions. More expensive upfront, cheaper than retroactive compliance when a state AG comes knocking. Watch March 2026: Commerce Department evaluation and FTC policy statement are both due.

SMB: You don't need to track every state law. You do need a basic AI governance framework—data access controls, an inventory of what tools touch customer data, and a written policy on employee AI use. That foundation holds regardless of which direction the rules go.

Action Items:

Build compliance to the strictest applicable standard—don't wait for federal clarity that may never come

Calendar March 2026: Commerce Department evaluation and FTC AI policy statement will reshape the landscape

Document your AI governance practices now—if enforcement ramps up, you'll want a paper trail showing you were proactive
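"Strictest applicable standard" can be made concrete as the union of every obligation across the places you operate. The sketch below shows that idea with placeholder data: the jurisdiction names (`STATE_A` and so on) and the mapping of obligations to them are hypothetical and not a statement of what any real law requires. Populate the table from your own legal review.

```python
def strictest_baseline(
    requirements: dict[str, set[str]], operating_in: list[str]
) -> set[str]:
    """Union of obligations across every jurisdiction you operate in.

    Raises KeyError for a jurisdiction missing from `requirements`, so a
    gap in the legal review is visible instead of silently ignored.
    """
    baseline: set[str] = set()
    for jurisdiction in operating_in:
        baseline |= requirements[jurisdiction]
    return baseline


# Hypothetical placeholder data for illustration only.
requirements = {
    "STATE_A": {"bias_audit"},
    "STATE_B": {"automated_decision_opt_out"},
    "STATE_C": {"bias_audit", "algorithmic_discrimination_controls"},
}
implemented = {"bias_audit"}

baseline = strictest_baseline(requirements, ["STATE_A", "STATE_B", "STATE_C"])
gaps = baseline - implemented
print("Baseline:", sorted(baseline))
print("Gaps:", sorted(gaps))
```

Keeping the gap list in version control also gives you the dated paper trail the last action item calls for.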

💡 FINAL THOUGHTS

Your Key Takeaway:

No surprises here. AI moves fast, it moves a lot of data, and most organizations are still catching up on both fronts.

If you take one thing from this week: work with your security and governance teams proactively. Stay in the loop on what tools are running, what data is flowing where, and what controls are actually in place. Getting ahead of this would be ideal—but it's a tough ask without stifling the innovation that makes AI worth adopting in the first place.

The balance is hard. But waiting until something breaks is harder.

Need help with AI Governance?

Check out → DigiForm-AI-Governance

We are out of tokens for this week's security brief. ✋

P.S. How long would it take someone to get admin access in your cloud environment? Honestly, do you know? Hit reply. We're building a benchmark piece and want to hear from real operators.

Keep reading, keep learning, and be a LEADER in AI 🤖

Hashi & The Context Window Team!

Follow the author:

X at @hashisiva | LinkedIn