STAT WORTH SHARING:

The most popular open-source LLM proxy — 97 million monthly downloads — was shipping stolen credentials to attackers for three hours last Monday. The entry point wasn't LiteLLM itself. It was Trivy, a vulnerability scanner running inside LiteLLM's own build pipeline.

If someone on your leadership team needs to see this, forward it their way.

TL;DR:

A threat group called TeamPCP compromised a vulnerability scanner, used it to steal publishing credentials, and backdoored LiteLLM — the open-source library that connects apps to every major LLM provider. CISA flagged a critical Langflow vulnerability after attackers weaponized it within 20 hours of disclosure, no proof-of-concept needed. HUMAN Security's latest report found that automated traffic now grows eight times faster than human traffic, with AI agent activity up nearly 8,000% year over year.

Development 1: TeamPCP Turned a Security Scanner Into a Supply Chain Weapon

What Happened

On March 24, a threat group called TeamPCP published backdoored versions of LiteLLM (1.82.7 and 1.82.8) on PyPI. LiteLLM is a Python library that acts as a universal proxy between applications and LLM providers like OpenAI, Anthropic, and AWS Bedrock. It is downloaded roughly 97 million times per month. The malicious packages deployed a credential stealer that went after SSH keys, cloud tokens, Kubernetes secrets, and environment variables (basically anything sensitive on the machine) and shipped it all to an attacker-controlled server.

The packages were live for about three hours before PyPI quarantined the entire project. The real story is how TeamPCP got the publishing credentials in the first place. They compromised Aqua Security's Trivy vulnerability scanner on March 19 by rewriting Git tags to point to a malicious release. On March 23, they hit Checkmarx's GitHub Actions using the same infrastructure. LiteLLM's CI/CD pipeline ran Trivy without a pinned version — so when it executed, it pulled the compromised scanner, which quietly exfiltrated LiteLLM's PyPI publishing credentials. From there, TeamPCP could publish whatever they wanted.
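One way to catch the unpinned-scanner problem described above is to flag any CI step that references an action by a movable tag or branch instead of a full commit SHA. The sketch below assumes the pipeline is defined as GitHub Actions workflow YAML; the action names in the sample are hypothetical, and the line-based matching is a rough heuristic, not a full YAML parser.

```python
import re

# A full commit SHA is 40 hex characters. Tags (v4) and branches (main) can be
# repointed by whoever controls the repository, which is what makes them risky.
FULL_SHA = re.compile(r"^[0-9a-f]{40}$")
USES_LINE = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^'\"\s#]+)")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return action references that are not pinned to a full commit SHA."""
    findings = []
    for line in workflow_text.splitlines():
        match = USES_LINE.match(line)
        if not match:
            continue
        ref = match.group(1)
        if ref.startswith("./") or ref.startswith("docker://"):
            continue  # local actions and container images are out of scope here
        _, _, version = ref.partition("@")
        if not FULL_SHA.match(version):
            findings.append(ref)
    return findings


# Hypothetical workflow: the organization and action names are made up.
EXAMPLE_WORKFLOW = """
jobs:
  scan:
    steps:
      - uses: actions/checkout@v4
      - uses: example-org/scanner-action@0123456789abcdef0123456789abcdef01234567
      - name: Scan
        uses: example-org/other-action@main
"""

if __name__ == "__main__":
    for ref in unpinned_actions(EXAMPLE_WORKFLOW):
        print(f"not pinned to a commit SHA: {ref}")
```

Pinning to a SHA does not make a dependency trustworthy, but it does mean a rewritten tag cannot silently change what your pipeline runs.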

CISA added the compromise to its Known Exploited Vulnerabilities catalog on March 25 under CVE-2026-33634. When community members tried to flag the issue on GitHub, the attackers flooded the thread with 88 bot comments from 73 compromised accounts in under two minutes, then used the hijacked maintainer account to close the issue as "not planned."

Why It Matters

→ The security tool was the entry point. TeamPCP didn't need to find a vulnerability in LiteLLM. They compromised Trivy — a tool built to scan for vulnerabilities — and used it to steal publishing credentials. Your vulnerability scanner just became the vulnerability.

→ Five days of warning went nowhere. Trivy's compromise was publicly disclosed on March 19. LiteLLM got hit on March 24. That's five days where rotating credentials would have prevented the entire downstream attack. It didn't happen. Most organizations using open-source AI tooling in their build pipelines probably wouldn't have caught it either.

→ This wasn't a random target. LiteLLM proxies between applications and multiple LLM providers, which means a single compromised instance can expose API keys for OpenAI, Anthropic, AWS, and every other connected service at once. Datadog's analysis confirmed the credential haul from one compromised host was far broader than what you'd get from a typical application.

Large enterprises running LiteLLM through the official Docker image with pinned dependencies were not affected — that deployment path didn't pull from the compromised PyPI packages. But if your engineering teams install Python packages via pip without pinning versions, or your CI/CD pipelines grab dependencies on the fly, this is exactly the kind of attack that hits you. Smaller organizations running AI prototypes tend to be looser about this stuff, which is why they're the ones most likely to get caught.

→ One action this week: Run `pip show litellm | grep Version` across every Python environment: developer machines, CI runners, Docker images, staging, production. If 1.82.7 or 1.82.8 was installed at any point on March 24, assume full credential compromise for that machine and everything reachable from it.
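If you would rather script that check than run it by hand, a minimal sketch using only the Python standard library might look like this. The function names are illustrative; the two version strings are the ones named in this brief.

```python
from importlib import metadata

# The two backdoored releases named in this brief.
COMPROMISED_VERSIONS = {"1.82.7", "1.82.8"}


def is_compromised(version: str) -> bool:
    """True if the version string matches one of the flagged releases."""
    return version in COMPROMISED_VERSIONS


def check_litellm() -> str:
    """Report on the litellm install in the environment running this script."""
    try:
        version = metadata.version("litellm")
    except metadata.PackageNotFoundError:
        return "litellm is not installed in this environment"
    if is_compromised(version):
        return f"litellm {version} is a flagged release: treat this machine as compromised"
    return f"litellm {version} is installed and is not one of the flagged releases"


if __name__ == "__main__":
    print(check_litellm())
```

This only reports what is installed right now. A machine that pulled a flagged version on March 24 and has since upgraded will look clean, so check pip logs, lockfile history, and CI build records as well.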

Development 2: Langflow Vulnerability Exploited in 20 Hours — Attackers Didn't Need a PoC

What Happened

On March 17, a critical vulnerability in Langflow — the open-source visual framework for building AI agent workflows — went public. Tracked as CVE-2026-33017 with a CVSS score of 9.3, it's an unauthenticated remote code execution flaw. One HTTP POST request is all it takes. No credentials needed.

Within 20 hours of the advisory going live, Sysdig's Threat Research Team observed the first exploitation attempts in the wild. No public proof-of-concept code existed. Attackers built working exploits directly from the advisory description and started scanning for exposed instances. By the 24-hour mark, they were harvesting .env and .db files from compromised Langflow installations.

CISA added CVE-2026-33017 to its Known Exploited Vulnerabilities catalog on March 25 and gave federal agencies until April 8 to patch or stop using the product entirely. Langflow has over 145,000 GitHub stars — it's a popular drag-and-drop tool for building RAG pipelines and AI agent workflows, which means a lot of data science teams have instances running somewhere.

Why It Matters

→ The patch window has collapsed to hours. Twenty hours from advisory to exploitation, with no PoC available. Attackers read the advisory description, built working exploits from it, and started scanning. If you're still patching on a monthly cycle, internet-facing AI tooling is the place that breaks first.

→ Langflow instances are treasure chests. Most of them have API keys for OpenAI, Anthropic, AWS, and database connections baked right in. Sysdig noted that compromising a single instance hands attackers lateral access to cloud accounts and connected data stores — so this isn't about losing one server. It's about everything that server talks to.

→ AI dev tools don't follow production security norms. Langflow's vulnerable endpoint was designed to be unauthenticated — it serves public flows. That's fine for demos. It's a disaster when those demos sit on the open internet with real credentials configured, which is exactly what Sysdig found happening.

If anyone on your team is running Langflow, update to version 1.9.0 immediately and change all the account passwords and service credentials connected to it — even if you don't think you were affected. If you're not sure whether Langflow is in your environment, that's the more urgent conversation to have.

→ One action this week: Ask your engineering and data teams what AI workflow or automation tools they're running. Langflow and similar platforms are typically set up without IT involvement. If you find any, update them and change the credentials attached to them.
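For Python environments, part of that inventory can be automated. The sketch below lists installed packages whose names appear on a watchlist. The watchlist is a hypothetical starting point, not a complete catalog, and it matches exact package names only, so extend it with whatever your teams actually use.

```python
import re
from importlib import metadata

# Hypothetical watchlist: a starting point only, add your own entries.
WATCHLIST = {"langflow", "litellm", "langchain"}


def normalize(name: str) -> str:
    """Normalize a package name the way PyPI does (lowercase, dashes)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def find_ai_tooling(installed, watchlist=WATCHLIST) -> dict:
    """Map watched package names to versions, given (name, version) pairs."""
    hits = {}
    for name, version in installed:
        key = normalize(name)
        if key in watchlist:
            hits[key] = version
    return hits


def installed_distributions():
    """Yield (name, version) for every package in the current environment."""
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        if name:  # skip broken installs that have no name recorded
            yield name, dist.version


if __name__ == "__main__":
    for name, version in sorted(find_ai_tooling(installed_distributions()).items()):
        print(f"{name}=={version}")
```

This covers one Python environment at a time and says nothing about Docker containers or hosted instances, so treat it as a supplement to asking your teams, not a replacement.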

Do you actually know what AI tools and frameworks your engineering teams are running right now?

Even "not really" is a useful answer — hit reply with a one-liner on where you are.

FROM OUR PARTNERS

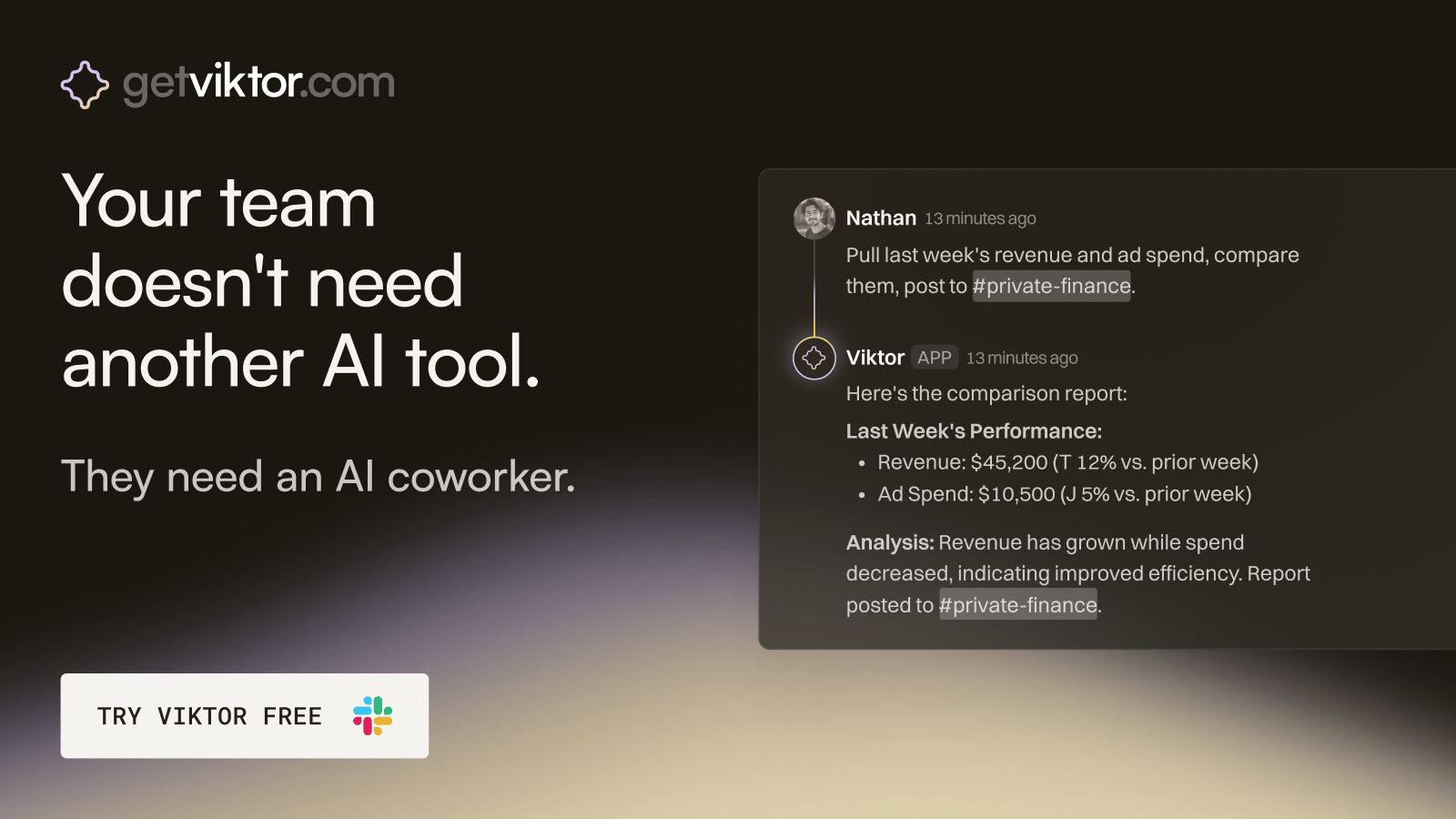

Check out Viktor - our partners on today’s newsletter. Viktor isn’t another AI agent but an AI coworker that you can bug 24/7/365.

The ops hire that onboards in 30 seconds.

Viktor is an AI coworker that lives in Slack, right where your team already works.

Message Viktor like a teammate: "pull last quarter's revenue by channel," or "build a dashboard for our board meeting."

Viktor connects to your tools, does the work, and delivers the actual report, spreadsheet, or dashboard. Not a summary. The real thing.

There’s no new software to adopt and no one to train.

Most teams start with one task. Within a week, Viktor is handling half of their ops.

Development 3: Bot Traffic Is Growing Eight Times Faster Than Human Traffic

What Happened

HUMAN Security released its 2026 State of AI Traffic & Cyberthreat Benchmark Report on March 26, drawing on more than one quadrillion digital interactions processed through its defense platform in 2025. The top-line number: automated internet traffic grew 23.5% year over year — roughly eight times faster than human traffic, which grew just 3.1%.

AI-driven traffic specifically surged 187% over the course of 2025, nearly tripling. The fastest-growing slice was agentic AI — autonomous systems that actually do things on the web, not just read it — which grew 7,851% year over year. At SXSW earlier this month, Cloudflare CEO Matthew Prince predicted bot traffic will surpass human traffic entirely by 2027.
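For readers who want to sanity-check the growth figures above, the arithmetic is short. The variable names are ours; the input numbers are the ones quoted from the report.

```python
# Year-over-year growth rates quoted above, in percent.
automated_growth = 23.5
human_growth = 3.1

# How many times faster automated traffic grew than human traffic.
growth_ratio = automated_growth / human_growth


def multiplier(percent_increase: float) -> float:
    """Convert a percentage increase into an end-over-start multiplier."""
    return 1 + percent_increase / 100


ai_traffic_multiplier = multiplier(187)    # AI-driven traffic over 2025
agentic_multiplier = multiplier(7851)      # agentic AI traffic year over year

if __name__ == "__main__":
    print(f"automated vs human growth: {growth_ratio:.1f}x")
    print(f"AI-driven traffic: {ai_traffic_multiplier:.2f}x its starting level")
    print(f"agentic AI traffic: {agentic_multiplier:.2f}x its starting level")
```

A 187% increase means traffic ended at 2.87 times where it started, which is where "nearly tripling" comes from. These are growth rates, not shares of total traffic.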

More than 95% of AI-driven automation is concentrated in three industries: retail and e-commerce, streaming and media, and travel and hospitality. Post-login account compromise attempts more than quadrupled year over year, averaging 400,000 per organization.

Why It Matters

→ "Bot or human" is the wrong question now. A legitimate AI shopping agent and an automated fraud script look almost identical in your traffic logs. Across HUMAN's entire dataset, the behavioral gap between benign and malicious automation was half a percentage point. The security question is no longer "is this a bot?" — it's "does this bot deserve trust?" That's a much harder problem.

→ Your customers are sending agents, not browsers. AI agent traffic grew nearly 8,000% in a single year. These agents are browsing product pages, logging into accounts, and completing checkout — the same flows your fraud tools are monitoring. If your detection flags all non-human traffic as suspicious, you're going to start blocking real customers.

→ Three companies control most of the AI traffic hitting your infrastructure. OpenAI's bots account for roughly 69% of all observed AI-driven traffic. Meta contributes 16%, Anthropic about 11%. Your access policy decisions about a handful of providers now determine the majority of your AI traffic exposure.

If you're running bot management or fraud detection, ask your vendor a simple question: can it distinguish between a customer's AI shopping agent and an attacker's automated script? If the answer is vague, that's a problem. For smaller organizations, the immediate concern is less about AI agents and more about the surge in account takeover attempts — MFA and rate limiting have never mattered more.

→ One action this week: Pull your web traffic analytics for the past 90 days and look at the trend in non-human traffic. If you can't tell how much of your traffic is bots, start there.
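If your analytics tool doesn't break out bot traffic, a rough first pass over raw web server logs can. The sketch below assumes logs in the common "combined" format, where the user agent is the last quoted field. The token lists are illustrative and incomplete, and the sample log lines are made up.

```python
import re
from collections import Counter

# Illustrative, incomplete lists of substrings seen in self-declared bot user agents.
AI_AGENT_TOKENS = ("gptbot", "chatgpt-user", "oai-searchbot", "claudebot", "meta-externalagent")
GENERIC_BOT_TOKENS = ("bot", "crawler", "spider", "curl", "python-requests")

# In the combined log format, the user agent is the last quoted field on the line.
USER_AGENT = re.compile(r'"([^"]*)"\s*$')


def classify(user_agent: str) -> str:
    """Bucket a user-agent string by what it claims to be."""
    ua = user_agent.lower()
    if any(token in ua for token in AI_AGENT_TOKENS):
        return "ai_agent"
    if any(token in ua for token in GENERIC_BOT_TOKENS):
        return "other_bot"
    return "presumed_human"


def traffic_breakdown(log_lines) -> Counter:
    """Count requests per bucket across an iterable of log lines."""
    counts = Counter()
    for line in log_lines:
        match = USER_AGENT.search(line)
        if match:
            counts[classify(match.group(1))] += 1
    return counts


# Made-up sample lines using documentation-reserved IP addresses.
SAMPLE_LOG = [
    '203.0.113.5 - - [01/Mar/2026:10:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Firefox/124.0"',
    '198.51.100.7 - - [01/Mar/2026:10:00:01 +0000] "GET /products HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (compatible; GPTBot/1.2; +https://openai.com/gptbot)"',
    '198.51.100.9 - - [01/Mar/2026:10:00:02 +0000] "GET /login HTTP/1.1" 200 128 "-" "curl/8.5.0"',
]

if __name__ == "__main__":
    breakdown = traffic_breakdown(SAMPLE_LOG)
    total = sum(breakdown.values())
    for bucket, count in breakdown.most_common():
        print(f"{bucket}: {count} ({count / total:.0%})")
```

User agents are self-reported and trivial to spoof, so this only counts bots that announce themselves. Treat the result as a floor on your non-human traffic, not a measurement of it.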

💡 FINAL THOUGHTS

AI infrastructure is moving faster than the security around it, and this week was one more example.

If someone in your organization needs to be reading this brief, it's probably the person making AI tool decisions without a security lens. Forward it their way.

We are out of tokens for this week's security brief. ✋

- Hashi

Follow the author:

X at @hashisiva | LinkedIn